Clinical Trials Were Not Always This Complicated

How trials got so bureaucratic, and how some leaders pushed back

Today’s clinical trials are deeply inefficient, a topic I’ve covered extensively in my writing. I’ve described wasteful practices, like the industry’s continued reliance on paper and manual transcription of data. I’ve documented how rising costs (increases of 10% a year) have made trials harder to run. And I’ve attempted to diagnose some of the causes: perverse incentives in the drug industry, fragmentation, and deep risk aversion.

But there’s also an important story about how the clinical trials industry got this way. Trials used to be far less bureaucratic, and many of the practices we take for granted in trials, like monitoring and source data verification, only became standardized in recent decades. The story of how trials transformed is a case study in how regulation can reshape an industry–and it can help inform today’s debates over how trials need to change.

The story I’m about to tell is most often recounted by a group I refer to as the “trialists”: the academic leaders who helped design the most important large-scale clinical trials of the 1980s and 1990s, and who continue to influence the debate over clinical trials today. To these trialists, today’s clinical trials industry exists in a kind of fallen state. In their account, the 1980s and 1990s represented an efflorescence in the science and practice of trials: we proved we could run trials at unprecedented pace and scale, and at remarkably low cost. Trials seemed poised to answer many of our most important clinical questions. Then came a decline: in the 1990s, the trials industry underwent a transformation that left it corporatized, bureaucratized, and diminished in ambition.

Here, I’ll recount the trialists’ story. I want to explore what that story gets right, what it misses, and what it tells us about the prospects for real reform.

The Rise of Large Trials

Within the story of clinical trials, there are two narratives running side by side. One is a story of continued scientific progress. The modern clinical trial is more than just a form of scientific experiment: it is a kind of truth-finding technology, refined and improved over decades, that has helped us unlock life-saving new treatments and improve care. Since the creation of the modern trial in the 1950s, the clinical trial has undergone a series of reforms that have cemented its status as the “gold standard” for clinical evidence.

But alongside the story of scientific progress is one of operational stagnation. Even as the science of trials advanced, the business of trials – sometimes referred to as the “trials enterprise” – has struggled to produce the evidence we need to develop new treatments and improve care. Most significantly, we have lost much of our capacity to run simple trials, cheaply, at scale.

To understand how this happened, we need to retrace the story of the trials enterprise. (If you’re interested in learning more about this story, I recommend this piece by Alexander Janaroff, which was a key source for this post, as well as FDA’s own history.)

Today, the drug industry’s trials are a big business; most trials are sprawling enterprises run by large organizations across multiple sites. But before this modern system of clinical trial research was developed, drugs were largely tested in an ad-hoc way by independent clinician-investigators. In the first half of the 20th century, the quality of those investigations could be mixed. At their best, investigators made genuine efforts to control their experiments, taking care to make adequate and unbiased comparisons between patients who took the drug and those who did not. At their worst, they relied on what can best be described as “vibes”. Drug companies might ask clinicians if the drug appeared to work, and use testimonials to justify their product’s safety and effectiveness. We had relatively few standards for what good, unbiased clinical research ought to look like.

Over time, however, the standards for research tightened, particularly in the 1950s, as the principles underlying modern trials were codified. That process was pushed along in the 1960s as the FDA started systematically reviewing drugs’ efficacy. But even well into the 1960s, as scientific rigor increased, the fundamental operating model of the trial hadn’t changed.

To test a drug, a company would typically send samples to clinician investigators, who would take responsibility for figuring out whether the drug worked. FDA’s own statutes and regulations still reference this model: they stress the importance of bringing on “qualified investigators” to study drugs. The idea that trials might not be run by individual investigators but rather by large organizations across multiple institutions was relatively new and did not fit well with FDA’s model of regulation (a problem that persists today, incidentally, despite some subsequent regulatory changes).

In contrast to the large, multi-center studies that researchers were running as early as the 1940s, most early investigator-led studies were small, limited to just one institution. That created a problem: a single investigator in a single academic institution could study only so many patients. By the 1960s, it had become clear that these small studies were inadequate to the task of studying the latest drugs. The drugs themselves had changed: many drugs developed in the postwar period, such as antibiotics, had dramatic and obvious effects that were noticeable in small studies. The newer classes of drugs that emerged in the 1960s, including cardiovascular drugs, were different. Researchers and regulators were interested in studying these drugs’ effects on “hard” endpoints (like death, heart attack, and stroke) rather than just measuring their effect on biomarkers. These hard endpoints were observed relatively infrequently (fortunately, heart disease patients usually live for quite a while before succumbing to their illness).

These new drugs required a different approach to running trials. Given how rare these hard outcomes were, researchers realized that their studies would need to be far larger if they wished to detect an impact on mortality in a reasonable timeframe. In the 1970s, modern methods of study size calculation, advanced by researchers like Richard Peto, emphasized that the efficacy of many drugs could only be determined if thousands of patients were studied.
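To make the arithmetic concrete, here is a rough sketch using the standard normal-approximation formula for comparing two event rates: patients per arm ≈ (z for significance + z for power)² × [p1(1 − p1) + p2(1 − p2)] / (p1 − p2)². The event rates below are hypothetical numbers I chose for illustration, not figures from ISIS or any other trial, and the function name `patients_per_arm` is my own.

```python
import math
from statistics import NormalDist


def patients_per_arm(p_control, p_treated, alpha=0.05, power=0.90):
    """Approximate patients needed in each arm of a two-arm trial.

    Uses the textbook normal approximation for a two-sided comparison
    of two proportions. All inputs here are illustrative assumptions.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)          # desired power
    variance = p_control * (1 - p_control) + p_treated * (1 - p_treated)
    effect = p_control - p_treated                 # absolute risk difference
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


if __name__ == "__main__":
    # Hypothetical "dramatic" drug: event rate falls from 30% to 10%.
    print("dramatic effect:", patients_per_arm(0.30, 0.10))
    # Hypothetical "moderate" drug: mortality falls from 10% to 8%.
    print("moderate effect:", patients_per_arm(0.10, 0.08))
```

Under these assumptions, the dramatic effect needs fewer than a hundred patients per arm, while the moderate effect (a 20% relative reduction in a 10% mortality rate) needs roughly 4,300 per arm. That is far more than a single investigator at a single institution could enroll.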

This realization led to the creation of a new approach to running studies, the “large simple trial.” In a pioneering paper by Salim Yusuf, Rory Collins, and Richard Peto, the authors described what this kind of trial should look like. First off, it should be very large: powered to detect small changes in mortality. That would require it to be run at many sites, each adhering to the same protocol. The authors emphasized that the protocol should be simple, and not too burdensome. They wrote: “Many clinicians involved in the management of patients are already overworked, and in practice a really large-scale trial is likely to succeed only if it adds little or nothing to their existing workload.”

The authors also stressed that simplicity need not sacrifice rigor or accuracy. In fact, simple protocols with simple treatment regimens would help improve patient and researcher compliance. While proper randomization was absolutely vital, other aspects of the trial might even vary a bit between study sites without biasing the results. As long as randomization was done properly, some “noise” should not threaten the validity of the trial. The largest trials of this era reflected this philosophy. One of the first large simple trials, the first of the “International Studies of Infarct Survival” (ISIS-1), which started in 1981, required researchers to submit only a one-page form with patient data and had no monitoring or endpoint adjudication.

While these large simple trials never made up more than a small portion of overall trial activity, they had a major impact on the field. For example, the second ISIS study randomized over 17,000 patients and showed that streptokinase and aspirin used together could significantly improve the survival of heart attack patients. Trials like the ISIS studies and GISSI (an Italian study of heart attack treatments) each enrolled tens of thousands of patients, and their findings transformed care for heart attack patients and saved many lives. More broadly, the principles espoused for large simple trials–the importance of rigorous randomization, sufficiently large study sizes, and simple protocols–became important general principles of good study design.

The large simple trials also transformed the trials industry. Their rise led to the creation of a new kind of organization, the “Academic Research Organization” (or ARO): university-based organizations that specialized in running large multicenter trials, also sometimes called “megatrials,” for both academia and industry (examples include the Duke Clinical Research Institute and Oxford’s Clinical Trial Service Unit). The rise of the megatrial helped build the reputation of a number of renowned trialists who remain influential in the field today. This group included Peto, Yusuf, and Collins, as well as Robert Califf (who would later become FDA commissioner) and Martin Landray (who would later become famous for leading the RECOVERY trial during COVID).

Transformation and Industrialization

With the growth of megatrials, the trials industry reached a critical pivot point. As early as the 1980s, industry had begun to demand more trials, more control, and more speed than university researchers and their AROs could provide. That led to the growth of a new kind of organization: the private contract research organization (or CRO). Over time, CROs displaced AROs as the primary operators of multi-site clinical trials. They were able to keep pace with rising industry demand, and even expanded those trials’ reach, extending their operations globally.

The rise of the CRO coincided with–and was facilitated by–another important development: the establishment of global good clinical practice (GCP) guidelines and intensified regulatory oversight of trials. While FDA oversight of clinical trials began in 1962, the 1990s GCP guidelines ushered in a new era of regulation. Trials began to face closer regulatory scrutiny, and monitoring and documentation requirements began to increase.

These two developments reinforced each other. CROs had the organizational capacity to adapt to the GCP guidelines and help drug companies meet them. In turn, the heightened oversight under the new GCP guidelines gave FDA greater confidence in the ability of CROs to manage trials. Prior to this, there was an expectation that an impartial, unbiased trial should be run by academics–not by the private sector.

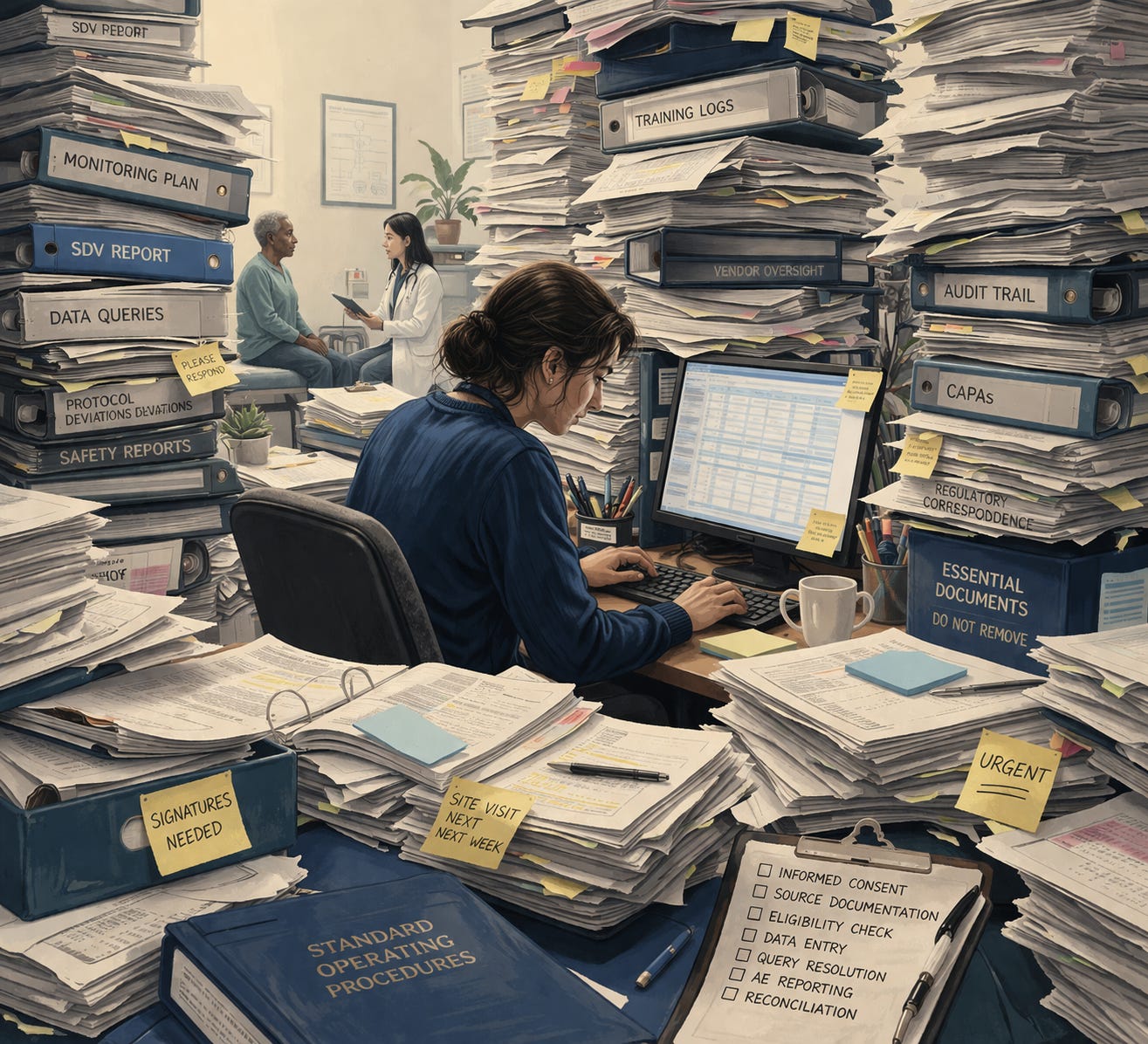

By the 2000s, CROs were running the majority of research; the relative importance of academic medical centers and AROs had greatly declined. In 1992, CROs earned just $1 billion, but as of 2024, CRO revenues are estimated to have reached nearly $60 billion. Nowadays, they are truly gigantic organizations: the biggest CROs, such as IQVIA, ICON, or Parexel, each employ tens of thousands of people and support many hundreds of studies every year, making them far larger than the AROs ever were. This transition changed the nature of the trials industry. Trials were industrialized, at least in part (I’ll argue soon that this industrialization was incomplete), and were conducted at a larger scale than ever before. Trials were also bureaucratized: the rise of the CRO coincided with the rise of the extensive system of monitoring, auditing, and documentation that defines today’s trials.

Meanwhile, the large simple trials pioneered by the leading trialists had begun to decline, driven by several factors. Funding had fallen off: the pharmaceutical industry still funded some trials run by AROs, but academic medical centers cut back their own research budgets under increasing financial pressure. NIH, which had played a crucial role in shepherding the rise of the large simple trial, cut back its support for trials too. The decline in larger-scale confirmatory trials was especially stark: NIH-funded phase 3 trials fell from 230 in 2005 to 62 in 2015.

There was also a shift in interest – driven in part by evolving science. By the 2010s, biomarker-based research and precision medicine would come to dominate the drug development landscape. Drug developers moved away from large-scale cardiovascular trials as they pursued products for narrower uses tested in smaller studies.

But a crucial change reinforced–and perhaps even drove–these other trends: increased bureaucracy. In an effort to comply with regulatory requirements, drug companies and CROs added procedures, complexity, and bureaucracy to their trials. As bureaucracy increased, costs rose. Even publicly funded and academically funded trials were affected, since both academic and industry-led trials were run by the same staff at the same sites. Rising costs made it difficult for academic medical centers and NIH to continue to fund large trials. Even the drug industry lost interest in large trials as they grew more expensive.

The End of Trials’ Golden Age

To the trialists, the decline of the large simple trial might have felt like the end of a golden age. The trials enterprise that had given birth to large simple trials was gone, replaced with something more bureaucratic, more expensive, and less capable of answering the questions that actually mattered to doctors and patients.

The trialists directed sharp criticism at the FDA and other drug regulators’ “Good Clinical Practice” (GCP) guideline. Published in 1996 by the International Conference on Harmonisation (ICH), a global consortium of regulators, the guideline was intended to serve as a global standard for how regulated clinical research should be conducted.

The trialists lodged many complaints against the guideline, both general and specific. On the specific side, they pointed to a number of seemingly wasteful practices encouraged by the guidelines: expensive on-site monitoring of study sites, verification of every transcribed data element, extensive and exhaustive reporting of even irrelevant adverse events, and time-consuming documentation to support inspections and audits.

More broadly, research leaders argued that the GCP guidelines–unlike the clinical guidelines issued by professional societies–were created with no scientific input, had no logical basis, and had no evidence backing their recommendations. Moreover, the guidelines’ entire emphasis was wrong: rather than promoting the good scientific practice that had been espoused by the designers of the large simple trials, they emphasized extensive documentation, auditability, and a nitpicking approach to data quality that had little bearing on the overall validity of the study.

Worse yet, the regulations were applied too broadly. The GCP’s scope was initially limited to regulated research–that is, research whose results had to be submitted to regulatory agencies like the FDA. Gradually, however, the ICH GCP’s scope expanded: in the EU, the ICH guidance was required to be applied to all research. In the US, the guidance’s legal status is “non-binding” but, in practice, it too is applied to all clinical research conducted in the country. The leading trialists and academic researchers decried this change, likening it to a “straitjacket” on their research.

If the guidelines got most of the blame, the CROs and the drug industry came in a close second. They interpreted FDA’s requirements in a maximally risk-averse way. Then their interpretations, reinforced by a fear of FDA inspections, created a stifling environment that drove up costs and bureaucracy at the expense of research quality.

There was an even darker edge to this concern. As several leading trialists presented suggestions to streamline trials, they feared opposition: “Certain entities have benefited from the complexity of the current regulatory environment — not just contract research organizations and companies providing training in the ICH-GCP guidelines, but also regulatory groups in pharmaceutical companies and other institutions, which have seen their revenue and influence increase substantially — and they too may oppose streamlining.” This view was part of a broader concern: that a kind of compliance-industrial complex had emerged, in which regulators, the pharmaceutical industry, and the contract research organizations shared an interest in retaining an elaborate and risk-averse bureaucracy.

As the trialists lodged their complaints, the pillars of the large simple trials movement were toppling, one by one. The trialists advocated for trials that were simple, large, and rigorously randomized. The industry was moving in the opposite direction. Trials were growing more complex, and CROs and the drug industry managed that complexity with tighter procedures and more layers of bureaucracy. This made large trials prohibitively expensive, so industry shifted its focus to smaller trials for complex biomarker-driven indications, and advocated for regulatory reforms that could make the trials even smaller and shorter. The last pillar to fall was randomization itself, as the drug industry encouraged the FDA to accept observational studies instead of trials. To the advocates of large simple trials, this was anathema; industry could more easily imagine doing away with trials altogether than making them simpler and cheaper.

The Response to the Backlash

The trialists pushed back hard against these trends the best way they knew how: through the institutions of science. In the 2000s, a series of journal articles and commentaries came out with titles like: “Randomized clinical trials: Slow death by a thousand unnecessary policies?”; “The Good Clinical Practice guideline: a bronze standard for clinical research”; and the more measured (but equally critical) “Clinical trials bureaucracy: unintended consequences of well-intentioned policy.” Over time, they would launch coalitions, host convenings, and lead scientific roundtables aimed at reversing the tide of bureaucracy in trials.

While these sorts of articles and convenings may have limited impact on the broader public discourse, they matter a great deal to FDA and other regulators, who depend on the support of scientists and academics. So as the criticisms mounted, the FDA engaged with the critics. In 2007, the agency partnered with Duke University to found the Clinical Trials Transformation Initiative (CTTI), aimed at making trials better and more efficient. The initiative’s founding CEO, Judith Kramer, articulated the problem: “The investment in time, money, and human resources for clinical trials has been rapidly increasing in recent years, without a commensurate increase in the number of new products entering the market place. There is a growing consensus that clinical trials are inefficient and too costly.”

In the years that followed, FDA, informed by the work of CTTI and the trialists themselves, issued new rules and guidance that tried to address the most egregious examples of bureaucracy and waste. For example, in 2010, FDA published a rule limiting how often drug companies needed to submit expensive and time-consuming “expedited safety reports” during their trials. In 2013, FDA issued guidance clarifying that sponsors did not need to manually verify that every data element collected in the trial was accurately transcribed, a practice called “100% source data verification.”

Yet in each case, the rules were undermined by cautious, risk-averse interpretations. After the finalization of the 2010 safety reporting rule, CTTI found that expedited reports submitted to FDA actually increased. Likewise, years after FDA recommended against routine use of 100% source data verification, the vast majority of trials still adopted the approach. Similar efforts at reform, including regulations aimed at simplifying informed consent forms and reducing the scope of IRB review, also had limited impact.

Despite these setbacks, efforts at reform continued, culminating in the 2025 issuance of the revised good clinical practice guideline. This guideline was explicitly designed to respond to the criticisms leveled by trialists. It cautions against overly complex trial protocols, and pushes hard for a “risk-proportionate” approach to tasks like study monitoring. Not only is the reform comprehensive, but, unlike the FDA-driven reforms that preceded it, the ICH guideline is global, raising hopes for more widespread adoption. But even as the guideline was being developed, trialists expressed concerns that over-cautious interpretations could limit its value.

“Back to the Future”: Reviving Large Simple Trials

Against the backdrop of regulatory reforms, another important story was taking shape: an ongoing effort to revive the large, simple trial, or at least to bring back some of its key principles. No story better exemplifies the power – and limits – of this approach than that of the COVID-19 RECOVERY Trial.

At the center of this story was Martin Landray, one of the advocates of simple trials we mentioned earlier. For years, he had helped design and run large simple trials alongside his colleagues at Oxford’s Clinical Trial Service Unit (CTSU) – an organization that had pioneered the concept of large simple trials. As trials grew more complex and bureaucratic, he was one of the loudest voices in favor of reform, and led several of CTTI’s efforts to reform and simplify trials. He even launched his own alternative trial guideline, “The Guidance for Good Randomized Clinical Trials,” as a scientifically grounded complement (and perhaps alternative) to the documentation-heavy and bureaucratic GCP guideline.

With the spread of COVID in early 2020, Landray had a chance to prove that a streamlined trial could be used to identify effective treatments for COVID. Before the pandemic had reached the UK, he had already begun to design the trial that would later become RECOVERY. He borrowed heavily from the large simple trials playbook: a one-page case report form, simple criteria for eligibility, and a simple protocol that could readily be administered at scale. But he modernized the approach, using web-based forms to collect data, an adaptive platform design to compare multiple treatments, online training for study sites, and additional data drawn from the NHS’s electronic health record systems. The trial cost just $500 per patient.

The streamlined design allowed the trial to quickly recruit patients and produce results. Within just a few months, RECOVERY had enlisted every acute hospital in the UK (along with sites in many other countries) and had identified life-saving treatments for COVID. Those treatments quickly became part of standard COVID care in hospitals, saving millions of lives.

Like the 2025 good clinical practice guidelines, the success of the RECOVERY trial felt like a turning point. Landray and his fellow trialists had spent decades arguing for a return to streamlined trials, and, in this moment, they seemed to be vindicated. If RECOVERY’s streamlined and broad-based approach could work for COVID, surely the model could be adopted for other diseases.

In fact, RECOVERY was part of a broader movement, pushing for new kinds of trials that could recapture the benefits of those older, large, simple trials. The trialists have outlined what those new trials might look like. Like the old trials, they would be streamlined to impose minimal additional burden on study sites. But unlike those old trials, these new trials could leverage the data in electronic health records to streamline trials even further and collect more useful data. Their reach could be broader too: “point-of-care” trials could be run in community settings outside of academic medical centers, and decentralized trials could be carried out remotely through telemedicine. These newer trials could build on the strengths of simple trials while creating something better and more efficient.

But these new kinds of trials face huge obstacles. The RECOVERY trial was run in a COVID state of exception. It’s not clear whether other trials of similar scope and ambition would be possible today, absent a major public health crisis. Nor, it seems, was such a trial possible here in the United States. Our own efforts to run large-scale trials during COVID were largely unsuccessful. Researchers continue to find ways to run streamlined trials, but they remain a very small part of the research enterprise.

Why Large Simple Trials Still Matter

Today, the problem of clinical trial inefficiency and bureaucracy feels more urgent than ever. There is newfound energy and enthusiasm around doing something about the problem. Against that backdrop, many of the debates that preoccupied the clinical trialists are playing out again: we are asking ourselves how we can make trials more efficient, more streamlined, and less bureaucratic. That makes this a good time to assess what we can learn from the past two decades of efforts at trial reform.

It’s clear that, for all they have achieved, the trialists have not yet been able to achieve their most important goal: to make trials significantly simpler and less bureaucratic. While the regulations have improved, trials’ costs and complexity have only risen. Even if we stemmed the tide of bureaucracy, there is probably no way to return to the trials enterprise we once had.

But if we can’t go back to the heyday of large simple trials, the good news is that we wouldn’t necessarily want to. We should acknowledge the accomplishments of our current industrialized bureaucratic trials system. The industry is vastly larger than it was in the 1980s “golden age”, and our capacity to learn from trials has never been greater. We’re running more trials across more sites and more countries, for a broader range of diseases. And despite the greater size and complexity of the trials, we retain confidence in their rigor and validity. Thanks to the rigor of our system, FDA’s leaders were able to make the argument that we only need one trial, instead of the previous two, to demonstrate a drug’s effectiveness.

The challenge, then, is preserving the benefits of today’s trial system while streamlining it and reining in its excesses. For that reason, we should keep learning from the era of large simple trials. The principles behind those large simple trials are still valid and deserve to be more widely known and followed. And more importantly, when we study the history of trials–and the work of pioneering trialists like Martin Landray–our minds open to new possibilities. We learn that there is nothing inevitable about the way our clinical trials system works today: in particular, it does not have to be so complex and bureaucratic. We’ve transformed the trials system before, and it can be transformed again.
